Have you ever spent all day staring at a computer screen and then looked outside on a bright, sunny day? If so, you may have noticed how much brighter the highlights of the real world are than the brightest “white” your monitor can produce. The same is true of television, phone, and tablet screens, but that is starting to change thanks to advances in camera and display technology known as High Dynamic Range (HDR).

In general terms, HDR video delivers a wider range of colors, a wider range of brightness, and more visible detail in both highlights and shadows. It isn’t possible to “show” HDR’s capabilities unless you are viewing HDR content on an HDR screen, and those screens aren’t yet ubiquitous. So we’re here to explain what HDR is, how it works, and why anyone who produces or consumes media should care.

The Long Road to HDR

Another way to understand HDR is through the limitations of older technology. Long story short, the main reason we haven’t been using HDR until recently is old-school video technology. The cathode ray tubes (CRTs) used in early television sets shaped the original display standard, and subsequent government regulation required continued use of the same television system to ensure universal compatibility. As a result, today’s digital broadcast standards are still limited in part by what CRTs could do in the 1940s and 50s. Even after digital came along, the RGB color standards used for computer screens were built around similar CRT capabilities.

HDR Photography vs. HDR Video

You may have heard the term HDR in connection with still photography. Even new iPhones have an HDR setting, right? That type of HDR is slightly different. While HDR video and HDR photography both produce images with greater contrast between light and dark, they achieve it in different ways. High-end cameras and recent smartphone apps create HDR photos by combining several shots of varying exposure taken in a single burst. The merged image keeps detail in both the highlights and the shadows, producing an exceptionally bright and vibrant final image that is still viewed on an ordinary screen. HDR video, by contrast, carries the extended brightness and color range all the way to an HDR display.
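The burst-merging idea can be sketched one pixel at a time: divide each shot’s pixel value by its exposure time to estimate scene brightness, then average those estimates while trusting well-exposed values most. This is a minimal sketch that assumes a linear sensor and 8-bit values; the function name and the weighting scheme are illustrative, not how any particular phone or camera actually does it.

```python
def merge_exposures(values, exposure_times, max_value=255):
    """Estimate relative scene radiance for one pixel from bracketed shots.

    Assumes a linear sensor response; real HDR merges usually estimate the
    camera's response curve first, so treat this as a simplified sketch.
    """
    numerator = 0.0
    denominator = 0.0
    for value, t in zip(values, exposure_times):
        # "Hat" weight: trust mid-tones most and ignore clipped or black values.
        weight = min(value, max_value - value)
        numerator += weight * (value / t)  # value / t = radiance estimate
        denominator += weight
    if denominator == 0:
        # Every shot was clipped or black: fall back to a plain average.
        return sum(v / t for v, t in zip(values, exposure_times)) / len(values)
    return numerator / denominator


# One pixel captured at 1/4, 1 and 4 units of exposure; the longest shot clipped.
print(merge_exposures([40, 160, 255], [0.25, 1.0, 4.0]))  # 160.0
```

A real HDR photo would then tone-map the merged values back into the range a standard screen can show.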

HDR Defined

After living with displays that are very limited in brightness and color, we’ve finally started seeing displays that come closer to the range of light and color the eye can see. How is this done? The following four factors define HDR:

1) Color Space

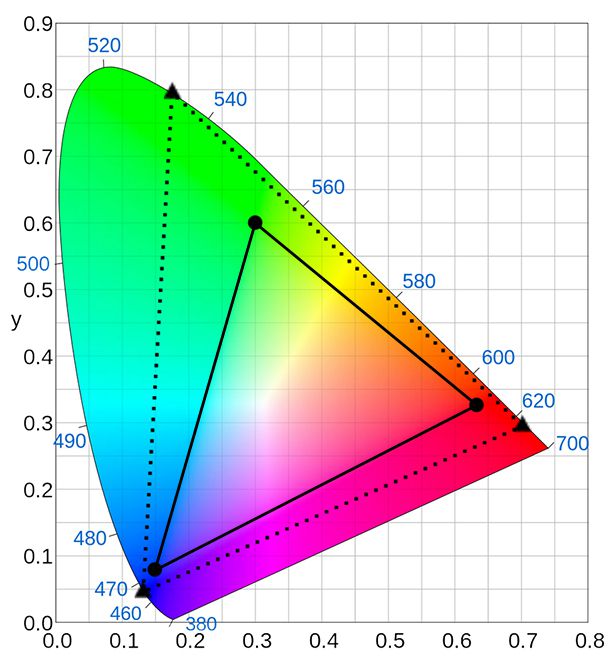

HDR uses an extension of the BT.2020 UHD/FUHD and DCI specifications. These use pure-wavelength primaries instead of primaries based on the light emitted by CRT phosphors or other materials, which means HDR can display a broader range of colors. The chart above shows the difference between Rec. 709, the older color standard (solid line), and the proposed Rec. 2020 color gamut (dotted line). The catch is that we can’t see these exact colors on our current monitors; only laser projectors can display the full range.

Source: Geoffrey Morrison/CNET (triangles); Sakurambo (base chart)
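To put a rough number on “a broader range of colors,” the sketch below compares the areas of the two gamut triangles in the CIE 1931 xy chromaticity diagram, using the published Rec. 709 and Rec. 2020 primaries. xy area is not perceptually uniform, so treat the ratio as an illustration rather than a measure of how many more colors a viewer can tell apart.

```python
# CIE 1931 xy chromaticities of the red, green and blue primaries.
REC709 = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
REC2020 = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]


def triangle_area(points):
    """Shoelace formula for the area of a triangle given three (x, y) points."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


ratio = triangle_area(REC2020) / triangle_area(REC709)
print(f"Rec. 2020 triangle is {ratio:.2f}x the area of Rec. 709")  # 1.89x
```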

2) Transfer Curves

Another requirement for HDR video relates to transfer curves, which map the scene light captured by a camera to the light shown on a display. Display brightness is measured in nits; one nit equals one candela per square meter (cd/m²). What is a candela? As the name suggests, a typical candle (think birthday candle) gives off roughly one candela.

Again, display brightness was previously defined by what CRT technology could do. While CRTs could produce fairly dark blacks, their peak brightness was generally limited to about 100 nits. Today’s high-end non-HDR LCDs can reach around 400 nits. HDR has the potential to blow past these limits using one of two new transfer curves: Hybrid Log-Gamma (HLG), developed by the BBC and NHK and standardized in ARIB STD-B67, which allows output brightness from 0.01 nit up to around 5,000 nits; and Dolby’s Perceptual Quantizer (PQ) curve, standardized in SMPTE ST 2084, which allows output brightness from 0.0001 nit up to 10,000 nits.
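For readers who want to see the curves themselves, here is a minimal sketch of the PQ EOTF (signal to nits) and its inverse using the constants published in SMPTE ST 2084, plus the HLG OETF (relative scene light to signal) as given in ITU-R BT.2100. Note that HLG works on relative light rather than absolute nits. The function names are our own, and this is an illustration, not production color-management code.

```python
import math

# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_eotf(signal):
    """Map a normalized PQ signal (0..1) to absolute display luminance in nits."""
    p = signal ** (1 / M2)
    return 10000 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits):
    """Map absolute luminance in nits (0..10000) to a normalized PQ signal."""
    y = (nits / 10000) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


# HLG OETF constants (ARIB STD-B67 / ITU-R BT.2100).
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)


def hlg_oetf(scene_light):
    """Map relative scene light (0..1) to a normalized HLG signal (0..1)."""
    if scene_light <= 1 / 12:
        return math.sqrt(3 * scene_light)  # square-root segment for darker tones
    return A * math.log(12 * scene_light - B) + C  # log segment for highlights


def stops(low_nits, high_nits):
    """Dynamic range in photographic stops (doublings of light)."""
    return math.log2(high_nits / low_nits)


print(round(pq_inverse_eotf(100), 3))  # 0.508
print(pq_eotf(1.0))  # 10000.0
print(round(stops(0.0001, 10000), 1))  # 26.6
```

The first line shows that roughly half of the PQ signal range is devoted to 0 to 100 nits, the old CRT ceiling; PQ allocates code values according to how sensitive the eye is at each brightness level.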

3) Bit Depth

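As transfer curves and display brightness have expanded, so has the risk of seeing a noticeable jump between adjacent light values: spreading a fixed number of code values across a much wider brightness range makes each step between them larger. This visible demarcation between two tones is called “stepping” (also known as banding). To avoid it, HDR requires at least 10-bit rendering at the display panel itself, which gives 1,024 levels per color channel instead of the 256 available with 8 bits.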
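The effect of bit depth can be estimated with the PQ curve: quantize a brightness level to the nearest code value, then measure how much brighter the next code value is. This sketch assumes full-range quantization and repeats the SMPTE ST 2084 constants so it stands alone; `relative_step` is a hypothetical helper written for this illustration.

```python
# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_eotf(signal):
    """Normalized PQ signal (0..1) to luminance in nits."""
    p = signal ** (1 / M2)
    return 10000 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits):
    """Luminance in nits to normalized PQ signal (0..1)."""
    y = (nits / 10000) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


def relative_step(nits, bits):
    """Relative luminance jump between adjacent code values near `nits`.

    Assumes full-range quantization (0..2**bits - 1); broadcast video usually
    uses a narrower "legal" range, which makes each step slightly larger.
    """
    max_code = 2 ** bits - 1
    code = round(pq_inverse_eotf(nits) * max_code)
    low = pq_eotf(code / max_code)
    high = pq_eotf((code + 1) / max_code)
    return (high - low) / low


for bits in (8, 10):
    print(f"{bits}-bit step near 100 nits: {relative_step(100, bits):.2%}")
```

At the same brightness, each 8-bit step comes out roughly four times larger than a 10-bit step, which is why the jump between tones becomes visible sooner at 8 bits.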

4) Metadata

The last thing that distinguishes most HDR video from standard BT.2020 is metadata. Most HDR formats carry information about the content that a screen or display can use to adapt it in real time (HLG is a notable exception, designed to work without metadata). This metadata can be static, describing the whole program (for example, its brightest pixel), or dynamic, changing scene by scene or even frame by frame. Dynamic metadata allows a compatible display to adapt the content as needed, in particular when the content exceeds the capabilities of the playback display.
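As a concrete example of static metadata, HDR10 content commonly carries MaxCLL (maximum content light level) and MaxFALL (maximum frame-average light level), defined in CTA-861.3. The sketch below computes both from toy frames and then applies a deliberately simple tone map of the kind a display might use when content is brighter than its panel. The `tone_map` function, its knee parameter, and the sample data are hypothetical; real displays use more sophisticated, often proprietary, curves.

```python
def static_metadata(frames):
    """Compute MaxCLL and MaxFALL (in nits) from linear-light frames.

    Each frame is a list of (r, g, b) pixel values in nits. A pixel's light
    level is its largest component; MaxCLL is the brightest pixel in the whole
    program and MaxFALL is the brightest frame-average light level.
    """
    max_cll = 0.0
    max_fall = 0.0
    for frame in frames:
        levels = [max(pixel) for pixel in frame]
        max_cll = max(max_cll, max(levels))
        max_fall = max(max_fall, sum(levels) / len(levels))
    return max_cll, max_fall


def tone_map(nits, content_peak, display_peak, knee_fraction=0.75):
    """Hypothetical linear roll-off that fits content into a dimmer display.

    Values below the knee pass through unchanged; values between the knee and
    content_peak are squeezed linearly into the range knee..display_peak.
    """
    if content_peak <= display_peak:
        return nits  # The display can show everything as mastered.
    knee = knee_fraction * display_peak
    if nits <= knee:
        return nits
    scale = (display_peak - knee) / (content_peak - knee)
    return knee + (min(nits, content_peak) - knee) * scale


frames = [
    [(100, 50, 20), (400, 300, 200)],  # pixel light levels 100 and 400
    [(1000, 800, 600), (10, 10, 10)],  # pixel light levels 1000 and 10
]
print(static_metadata(frames))  # (1000, 505.0)
print(round(tone_map(725, content_peak=1000, display_peak=600), 1))  # 525.0
```

Using the program-wide MaxCLL as `content_peak` is the static case; dynamic metadata would let the display pick a different peak for each scene.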

Current HDR technology still can’t recreate the full detail and range our eyes can perceive, but it’s getting closer, and it is poised to raise our standards for viewing films and imagery across all screens. To help educate the Nimia community, we’re expanding this post into a series on HDR, so stay tuned to our blog for upcoming posts, including how to shoot for HDR and how to grade and deliver HDR files.

Also, we’re only scratching the surface of what you can read and learn about HDR, and several great resources provide more in-depth information. In particular, Mystery Box’s five-part series has even more explanation and information for those who want to go deep.